- Eso sip of health elder

- Watch silicon valley season 3 episode 3

- Salma agha husband manzar shah

- Digital video camera review

- Fusion 360 free for hobbyist

- Cardrecovery free serial key

- Overcooked 2 xbox

- Old odia movie

- Askeland 5th edition solutions

- Checkpoint vpn client windows 10 creators update

- The soap tv show

- Como instalar primavera p6 v7

- Nord vpn not connecting

- Birthright campaign setting pdf downlaod

- Payday 2 ultra low graphics mod

- Quarkxpress 2018 tutorial pdf

- Onenote gem windows 10

- Ozzy osbourne discography wikipedia

- Epsxe chrono cross compatibility

- Sekurosufia illusion games

- How to get full coptic reader version on iphone

- Nero 12 platinum extension

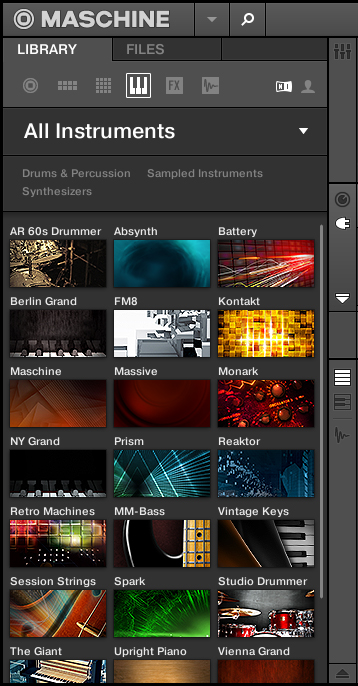

- Maschine library

- How to select a region in garageband 10-1

- Indian wedding dvd cover template psd free download

- Yu gi oh 5ds season 3 epsoide 4

- Jaya kishori bhajan live

- Skyrim special edition joy of perspective

- Library for proteus 8 professional

Working with web services at scale can be challenging due to networking issues, throttling, and large amounts of CPU downtime while the client waits on the server’s response.
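A common way to reclaim that idle waiting time is to keep many requests in flight at once and to back off when the server throttles. The sketch below illustrates this with plain `asyncio`. It is not SynapseML's implementation. `fake_service`, its throttle rate, and the retry parameters are hypothetical stand-ins for a real HTTP endpoint and its limits.

```python
import asyncio
import random


class Throttled(Exception):
    """Raised by the simulated service when it rejects a request (like an HTTP 429)."""


async def fake_service(x: int, rng: random.Random, fail_rate: float) -> int:
    """Hypothetical stand-in for a remote web service: slow, and sometimes throttles."""
    await asyncio.sleep(0.01)  # simulated network round trip; the event loop is free meanwhile
    if rng.random() < fail_rate:
        raise Throttled()
    return x * x


async def call_with_retry(x, sem, rng, fail_rate, max_retries, base_delay=0.005):
    """Call the service under a concurrency cap, backing off exponentially when throttled."""
    for attempt in range(max_retries + 1):
        async with sem:  # at most `concurrency` requests are in flight at once
            try:
                return await fake_service(x, rng, fail_rate)
            except Throttled:
                pass
        # wait outside the semaphore so other requests can use the free slot
        await asyncio.sleep(base_delay * 2 ** attempt)
    raise RuntimeError(f"request {x} still throttled after {max_retries} retries")


async def process_all(items, concurrency=8, fail_rate=0.2, max_retries=8, seed=0):
    rng = random.Random(seed)
    sem = asyncio.Semaphore(concurrency)
    # gather overlaps the waits but returns results in input order
    return await asyncio.gather(
        *(call_with_retry(x, sem, rng, fail_rate, max_retries) for x in items)
    )


if __name__ == "__main__":
    print(asyncio.run(process_all(range(10))))
```

The semaphore bounds the load placed on the server, and the exponential backoff spaces out retries after throttling. Meanwhile, the client's CPU keeps working on other requests instead of blocking on each response.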


## Getting started with state-of-the-art, pre-built intelligent models

Many tools in SynapseML don’t require a large labelled training dataset. Instead, SynapseML provides simple APIs for pre-built intelligent services, such as Azure Cognitive Services, to quickly solve large-scale AI challenges related to both business and research. SynapseML enables developers to embed over 45 different state-of-the-art ML services directly into their systems and databases. The latest release includes added support for distributed form recognition, conversation transcription, and translation, as illustrated in Figure 2. These ready-to-use algorithms can parse a wide variety of documents, transcribe multi-speaker conversations in real time, and translate text to over 100 different languages.

Figure 2: Distributed form recognition and translation in SynapseML extracts multi-lingual insights from unstructured collections of documents. See the full example notebook for more details.
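Conceptually, a pre-built service can be treated as one more column transformation. The input column is sent in batches, the results are attached to each row, and failures are recorded rather than aborting the whole job. The plain-Python sketch below illustrates only that pattern. It is not SynapseML's API, and `apply_service`, `fake_translate_fr`, the column names, and the batch size are all hypothetical.

```python
from typing import Any, Callable, Dict, List, Optional

Row = Dict[str, Any]


def fake_translate_fr(texts: List[Optional[str]]) -> List[str]:
    """Hypothetical stand-in for a pre-built translation service; nothing is trained locally."""
    glossary = {"hello": "bonjour", "thank you": "merci"}
    if any(t is None for t in texts):
        raise ValueError("null text in batch")
    # unknown phrases pass through unchanged in this toy version
    return [glossary.get(t.lower(), t) for t in texts]


def apply_service(
    rows: List[Row],
    input_col: str,
    output_col: str,
    error_col: str,
    service: Callable[[List[Any]], List[Any]],
    batch_size: int = 2,
) -> List[Row]:
    """Return new rows with the service output (or an error message) attached to each row."""
    out: List[Row] = []
    for start in range(0, len(rows), batch_size):
        batch = rows[start:start + batch_size]
        inputs = [row[input_col] for row in batch]
        try:
            results: List[Any] = list(service(inputs))
            errors: List[Optional[str]] = [None] * len(batch)
        except Exception as exc:  # a failed batch is recorded, not fatal to the whole table
            results = [None] * len(batch)
            errors = [repr(exc)] * len(batch)
        for row, result, error in zip(batch, results, errors):
            out.append({**row, output_col: result, error_col: error})  # input rows stay unmodified
    return out


if __name__ == "__main__":
    table = [{"id": 1, "text": "Hello"}, {"id": 2, "text": "thank you"}, {"id": 3, "text": None}]
    for row in apply_service(table, "text", "translated", "error", fake_translate_fr):
        print(row)
```

Batching amortizes per-request overhead. In this toy version, an error column keeps one bad batch from discarding results that were already computed for the rest of the table.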

## General availability and enterprise support on Azure Synapse Analytics

Over the past five years, we have worked to improve and stabilize the SynapseML library for production workloads. Developers who use Azure Synapse Analytics will be pleased to learn that SynapseML is now generally available on this service with enterprise support. They can now build large-scale ML pipelines using Azure Cognitive Services, LightGBM, ONNX, and other selected SynapseML features. The service even includes templates to quickly prototype distributed ML systems, such as visual search engines, predictive maintenance pipelines, document translation, and more. Please see the GA announcement guide for more details.

## Simplifying distributed ML through a unified API

Writing fault-tolerant distributed programs is complex and error-prone. For example, consider the distributed evaluation of a deep network. The first step is to send a multi-GB model to hundreds of worker machines without overwhelming the network. Then, data readers must coordinate to ensure that all data is queued for processing and that GPUs are at full capacity. If new computers join or leave the cluster, new worker machines must receive copies of the model, and data readers need to adapt to share work with the new machines and re-compute lost work. Finally, progress must be tracked to ensure resources are properly freed.

Sure, frameworks like Horovod can manage this, but if a teammate wants to compare against a different ML framework, such as LightGBM, XGBoost, or SparkML, it requires a new environment and cluster. Moreover, these training systems aren’t designed to serve or deploy models, so separate inference and streaming architectures are required.

SynapseML simplifies this experience by unifying many different ML frameworks with a single API that is scalable, data- and language-agnostic, and that works for batch, streaming, and serving applications. It’s designed to help developers focus on the high-level structure of their data and tasks, not the implementation details and idiosyncrasies of different ML ecosystems and databases.

A unified API standardizes many of today’s tools, frameworks, and algorithms, streamlining the distributed ML experience. This enables developers to quickly compose disparate ML frameworks for use cases that require more than one framework, such as web-supervised learning, search engine creation, and many others. SynapseML can also train and evaluate models on single-node, multi-node, and elastically resizable clusters of computers, so developers can scale up their work without wasting resources. In addition to being available in several programming languages, the API abstracts over a wide variety of databases, file systems, and cloud data stores to simplify experiments no matter where data is located, as shown in Figure 1.

Figure 1: The simple API abstracts over many different ML frameworks, scales, computation paradigms, data providers, and languages.
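The coordination steps described above include handing out work, reacting to workers joining or leaving, re-computing lost work, and tracking progress. They can be sketched as a toy, single-process coordinator. This is a hypothetical illustration, not how SynapseML or Horovod implement it. `WorkQueue`, integer partitions, and the worker names are assumptions, and model shipping is only noted in a comment.

```python
from collections import deque
from typing import Deque, Dict, List, Optional, Set


class WorkQueue:
    """Toy coordinator: hands out data partitions and re-queues work lost when a worker leaves."""

    def __init__(self, partitions: List[int]) -> None:
        self.pending: Deque[int] = deque(partitions)  # partitions waiting for a worker
        self.in_flight: Dict[str, Set[int]] = {}      # worker -> partitions it currently holds
        self.done: Dict[int, str] = {}                # partition -> worker that finished it

    def join(self, worker: str) -> None:
        # a real system would first ship the (possibly multi-GB) model to the new worker
        self.in_flight.setdefault(worker, set())

    def leave(self, worker: str) -> None:
        # anything the departed worker held is lost; put it at the front to recompute first
        lost = self.in_flight.pop(worker, set())
        self.pending.extendleft(sorted(lost, reverse=True))

    def next_task(self, worker: str) -> Optional[int]:
        if worker not in self.in_flight or not self.pending:
            return None
        partition = self.pending.popleft()
        self.in_flight[worker].add(partition)
        return partition

    def complete(self, worker: str, partition: int) -> None:
        held = self.in_flight.get(worker)
        if held is None or partition not in held:
            return  # stale result from a worker that already left; the work was re-queued
        held.remove(partition)
        self.done[partition] = worker

    def finished(self) -> bool:
        # progress tracking: once nothing is pending or in flight, resources can be freed
        return not self.pending and not any(self.in_flight.values())


def simulate() -> Dict[int, str]:
    q = WorkQueue(list(range(6)))
    q.join("w1")
    q.join("w2")
    first = q.next_task("w1")   # w1 gets partition 0
    second = q.next_task("w2")  # w2 gets partition 1
    assert first is not None and second is not None
    q.complete("w1", first)
    q.leave("w2")               # partition 1 is lost and re-queued
    q.complete("w2", second)    # late result from w2 is ignored
    q.join("w3")
    while not q.finished():
        for worker in ("w1", "w3"):
            partition = q.next_task(worker)
            if partition is not None:
                q.complete(worker, partition)
    return q.done


if __name__ == "__main__":
    print(simulate())
```

Even this toy version has to handle stale results and re-queuing. That is part of why hand-written distributed evaluation is easy to get wrong.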